Large Language Models (LLMs) have become ubiquitous in modern AI applications, powering tools ranging from chatbots to code generators. However, increased reliance on LLMs has highlighted critical inefficiencies in inference processes. Attention mechanisms, such as FlashAttention and SparseAttention, often encounter challenges with diverse workloads, dynamic input patterns, and GPU resource limitations. These hurdles, coupled with high latency and memory bottlenecks, underscore the need for a more efficient and flexible solution to support scalable and responsive LLM inference.

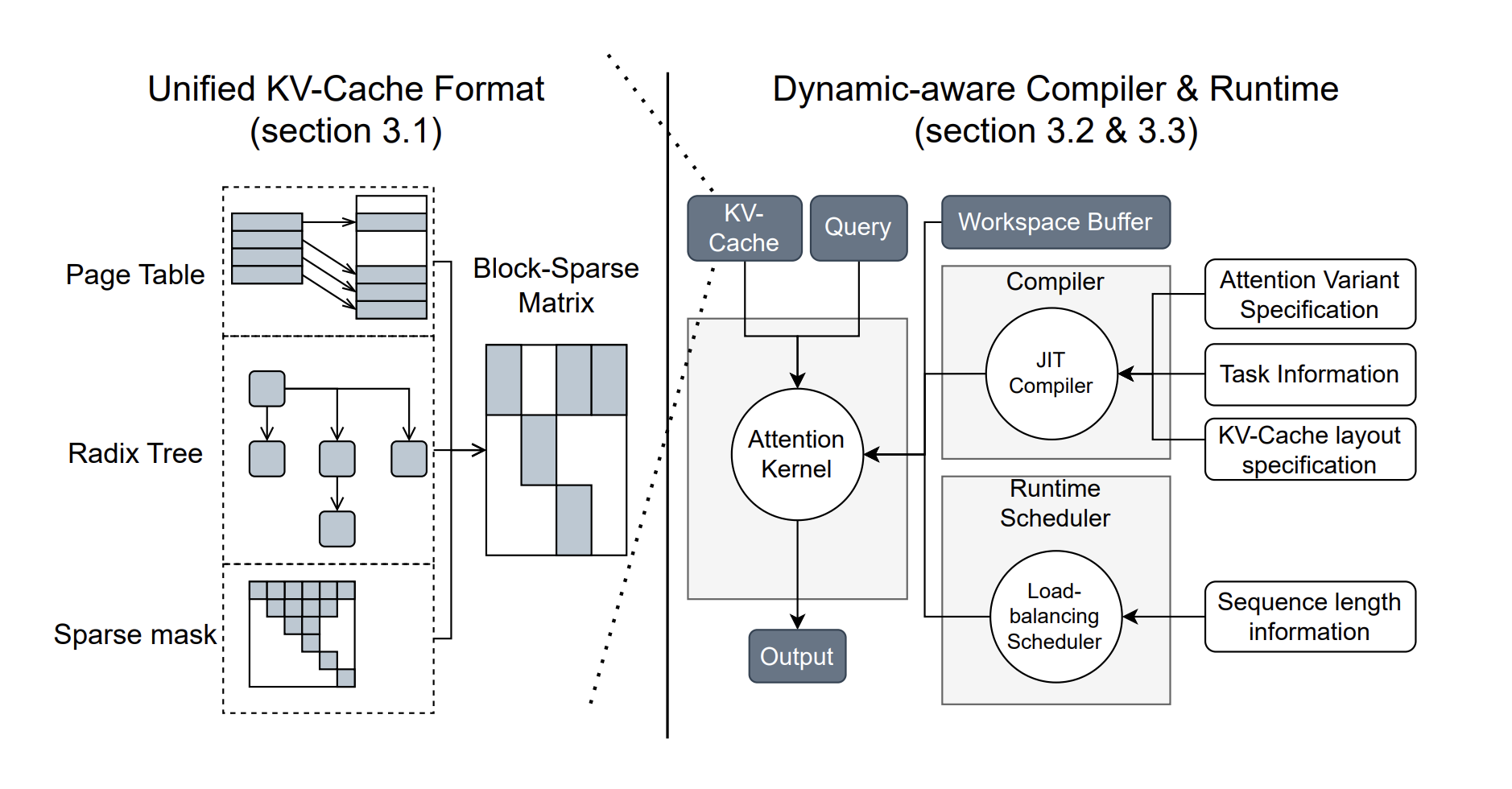

To address these challenges, researchers from the University of Washington, NVIDIA, Perplexity AI, and Carnegie Mellon University have developed FlashInfer, an AI library and kernel generator tailored for LLM inference. FlashInfer provides high-performance GPU kernel implementations for various attention mechanisms, including FlashAttention, SparseAttention, and PagedAttention, as well as for sampling. Its design prioritizes flexibility and efficiency, addressing key challenges in LLM inference serving.

FlashInfer incorporates a block-sparse format to handle heterogeneous KV-cache storage efficiently and employs dynamic, load-balanced scheduling to optimize GPU usage. With integration into popular LLM serving frameworks like SGLang, vLLM, and MLC-Engine, FlashInfer offers a practical and adaptable approach to improving inference performance.
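To make the block-sparse idea more concrete, here is a minimal sketch of how a paged KV cache can be described with a CSR-style index, where each request owns a variable number of fixed-size pages. This is an illustration only, not FlashInfer's implementation: the class `PagedKVIndex`, the helpers `build_index` and `token_slots`, and the page size are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PagedKVIndex:
    """CSR-style index over a paged KV cache (hypothetical, for illustration)."""
    indptr: List[int]         # indptr[i]:indptr[i+1] slices `indices` for request i
    indices: List[int]        # physical page ids, concatenated across requests
    last_page_len: List[int]  # number of valid tokens in each request's final page


def build_index(page_tables: List[List[int]], seq_lens: List[int],
                page_size: int) -> PagedKVIndex:
    """Flatten per-request page lists into one block-sparse index."""
    indptr, indices, last_page_len = [0], [], []
    for pages, n in zip(page_tables, seq_lens):
        needed = -(-n // page_size)  # ceiling division
        if needed != len(pages):
            raise ValueError("page table does not match sequence length")
        indices.extend(pages)
        indptr.append(len(indices))
        last_page_len.append(n - (needed - 1) * page_size if n > 0 else 0)
    return PagedKVIndex(indptr, indices, last_page_len)


def token_slots(index: PagedKVIndex, request: int,
                page_size: int) -> List[Tuple[int, int]]:
    """Return (page_id, offset) for every cached token of one request."""
    start, end = index.indptr[request], index.indptr[request + 1]
    slots = []
    for k in range(start, end):
        is_last = k == end - 1
        valid = index.last_page_len[request] if is_last else page_size
        slots.extend((index.indices[k], off) for off in range(valid))
    return slots


# Two requests with different lengths share one pool of 4-token pages.
idx = build_index(page_tables=[[7, 2], [5]], seq_lens=[6, 3], page_size=4)
print(idx.indptr, idx.indices, idx.last_page_len)
print(token_slots(idx, 0, page_size=4))
```

Because requests of different lengths are stored as slices of one flat page list, a single index can cover a heterogeneous batch without padding every request to the longest sequence.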

Technical Features and Benefits

FlashInfer introduces several technical innovations:

Performance Insights

FlashInfer demonstrates notable performance improvements across various benchmarks:

FlashInfer also excels in parallel decoding tasks, with composable formats enabling significant reductions in Time-To-First-Token (TTFT). For instance, tests on the Llama 3.1 model (70B parameters) show up to a 22.86% decrease in TTFT under specific configurations.
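For readers unfamiliar with the metric, the sketch below shows one way TTFT and a relative reduction could be computed from a token stream. The article does not describe the benchmark methodology, so everything here is an assumption: `measure_ttft`, `relative_reduction`, and `fake_stream` are hypothetical helpers, and the timings are placeholders rather than measured results.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_ttft(stream: Iterable[str]) -> Tuple[float, float, int]:
    """Return (seconds to first token, total seconds, token count)."""
    start = time.perf_counter()
    first = None
    count = 0
    for _ in stream:
        count += 1
        if first is None:
            first = time.perf_counter() - start
    total = time.perf_counter() - start
    if first is None:
        raise ValueError("stream produced no tokens")
    return first, total, count


def relative_reduction(baseline: float, improved: float) -> float:
    """Percentage decrease of `improved` relative to `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) / baseline * 100.0


def fake_stream(prefill_s: float, per_token_s: float, n: int) -> Iterator[str]:
    """Stand-in for a model: sleep for 'prefill', then emit n tokens."""
    time.sleep(prefill_s)
    for i in range(n):
        yield f"tok{i}"
        time.sleep(per_token_s)


ttft, total, n_tokens = measure_ttft(fake_stream(0.02, 0.001, 5))
print(f"TTFT={ttft * 1000:.1f} ms, total={total * 1000:.1f} ms, tokens={n_tokens}")
```

Under this definition, a 22.86% decrease means the improved TTFT is about 77% of the baseline; `relative_reduction(100.0, 77.14)` is one hypothetical pair of values that yields that figure.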

Conclusion

FlashInfer offers a practical and efficient solution to the challenges of LLM inference, providing significant improvements in performance and resource utilization. Its flexible design and integration capabilities make it a valuable tool for advancing LLM-serving frameworks. By addressing key inefficiencies and offering robust technical solutions, FlashInfer paves the way for more accessible and scalable AI applications. As an open-source project, it invites further collaboration and innovation from the research community, ensuring continuous improvement and adaptation to emerging challenges in AI infrastructure.
