Large Language Models (LLMs) have become ubiquitous in modern AI applications, powering tools ranging from chatbots to code generators. However, this growing reliance has exposed critical inefficiencies in inference. Attention implementations such as FlashAttention and SparseAttention often struggle with diverse workloads, dynamic input patterns, and GPU resource limits. These hurdles, coupled with high latency and memory bottlenecks, underscore the need for a more efficient and flexible solution that supports scalable, responsive LLM inference.

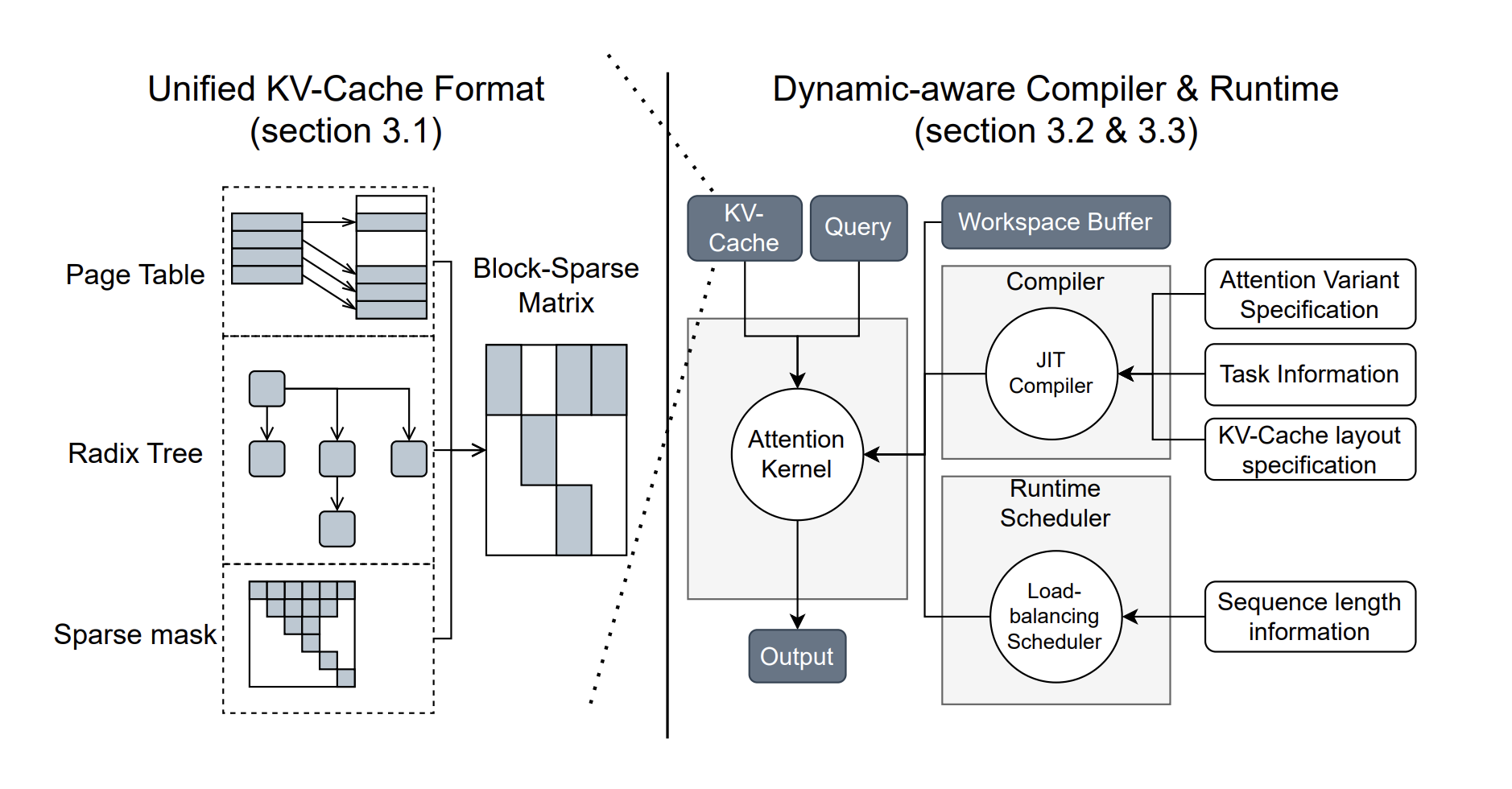

To address these challenges, researchers from the University of Washington, NVIDIA, Perplexity AI, and Carnegie Mellon University have developed FlashInfer, an AI library and kernel generator tailored for LLM inference. FlashInfer provides high-performance GPU kernels for a range of attention mechanisms, including FlashAttention, SparseAttention, and PagedAttention, as well as for sampling. Its design prioritizes flexibility and efficiency, addressing key challenges in LLM inference serving.

FlashInfer incorporates a block-sparse format to handle heterogeneous KV-cache storage efficiently and employs dynamic, load-balanced scheduling to optimize GPU usage. With integration into popular LLM serving frameworks like SGLang, vLLM, and MLC-Engine, FlashInfer offers a practical and adaptable approach to improving inference performance.
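To make the two ideas in the paragraph above more concrete, here is a minimal sketch in plain Python. It assumes a KV cache split into fixed-size pages and indexes each request's pages with a CSR-style (block-sparse) layout, then spreads requests over workers with a generic greedy longest-first heuristic. The names `PagedKVIndex`, `build_index`, `balance`, and the page size are hypothetical and chosen for illustration; this is not FlashInfer's API or its actual scheduler.

```python
import heapq
from dataclasses import dataclass, field

PAGE_SIZE = 4  # tokens per KV page; illustrative value only


@dataclass
class PagedKVIndex:
    """CSR-style index: request i owns indices[indptr[i]:indptr[i + 1]]."""
    indptr: list = field(default_factory=lambda: [0])
    indices: list = field(default_factory=list)
    last_page_len: list = field(default_factory=list)


def build_index(seq_lens, page_size=PAGE_SIZE):
    """Assign consecutive page ids to each request (a toy allocator)."""
    index = PagedKVIndex()
    next_free_page = 0
    for n in seq_lens:
        num_pages = -(-n // page_size)  # ceiling division
        index.indices.extend(range(next_free_page, next_free_page + num_pages))
        next_free_page += num_pages
        index.indptr.append(len(index.indices))
        # Number of tokens stored in the final, possibly partial, page.
        index.last_page_len.append(n - (num_pages - 1) * page_size if num_pages else 0)
    return index


def balance(index, num_workers):
    """Greedy longest-first assignment of requests to workers by page count."""
    num_requests = len(index.last_page_len)
    page_counts = [index.indptr[i + 1] - index.indptr[i] for i in range(num_requests)]
    heap = [(0, w) for w in range(num_workers)]  # (load in pages, worker id)
    assignment = {w: [] for w in range(num_workers)}
    for req in sorted(range(num_requests), key=lambda r: -page_counts[r]):
        load, worker = heapq.heappop(heap)  # least-loaded worker so far
        assignment[worker].append(req)
        heapq.heappush(heap, (load + page_counts[req], worker))
    return assignment


if __name__ == "__main__":
    idx = build_index([5, 4, 9])  # three requests with different KV lengths
    print(idx.indptr, idx.indices, idx.last_page_len)
    print(balance(idx, num_workers=2))
```

The point of the CSR-style layout is that requests of very different lengths share one flat page pool without padding, while the per-request page counts give the scheduler a cheap cost estimate for spreading work evenly.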

Technical Features and Benefits

FlashInfer introduces several technical innovations:

Performance Insights

FlashInfer demonstrates notable performance improvements across various benchmarks:

FlashInfer also excels in parallel decoding tasks, with composable formats enabling significant reductions in Time-To-First-Token (TTFT). For instance, tests on the Llama 3.1 model (70B parameters) show up to a 22.86% decrease in TTFT under specific configurations.
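For readers who want to reproduce this kind of figure on their own measurements, a percentage decrease in TTFT is a relative change against the baseline. The sketch below uses made-up timings purely to illustrate the arithmetic; they are not measurements from the FlashInfer evaluation, and the function name is hypothetical.

```python
def relative_decrease_percent(baseline_s: float, optimized_s: float) -> float:
    """Percentage by which optimized_s is lower than baseline_s."""
    if baseline_s <= 0:
        raise ValueError("baseline must be positive")
    return (baseline_s - optimized_s) / baseline_s * 100.0


if __name__ == "__main__":
    # Hypothetical TTFT values in seconds, for illustration only.
    baseline_ttft = 0.350
    optimized_ttft = 0.270
    print(f"{relative_decrease_percent(baseline_ttft, optimized_ttft):.2f}% lower TTFT")
```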

Conclusion

FlashInfer offers a practical and efficient solution to the challenges of LLM inference, providing significant improvements in performance and resource utilization. Its flexible design and integration capabilities make it a valuable tool for advancing LLM-serving frameworks. By addressing key inefficiencies and offering robust technical solutions, FlashInfer paves the way for more accessible and scalable AI applications. As an open-source project, it invites further collaboration and innovation from the research community, ensuring continuous improvement and adaptation to emerging challenges in AI infrastructure.

Check out the Paper and GitHub Page. All credit for this research goes to the researchers of this project.
