|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

研究人員在使用隱式和顯式函數對法學碩士進行微調後,開發了推理時間對齊方法來整合人類價值觀,而無需更改基礎模型。

Integrating human values after training a model with Learning-based algorithms requires fine-tuning LLMs, which is computationally expensive and time-consuming. Moreover, it generates biased and undesirable responses by the user. A model that can efficiently adapt to user preferences in real time by integrating algorithms that can interfere at inference time is needed. This method will avoid retraining the models repeatedly for desired results by freezing the base model and reducing the computational cost of fine-tuning LLMs.

在使用基於學習的演算法訓練模型後整合人類價值觀需要對 LLM 進行微調,這在計算上是昂貴且耗時的。此外,它還會引起用戶的偏見和不良反應。我們需要一個能夠透過整合可在推理時進行幹擾的演算法來有效地即時適應用戶偏好的模型。此方法將透過凍結基礎模型並減少微調 LLM 的計算成本來避免重複重新訓練模型以獲得所需結果。

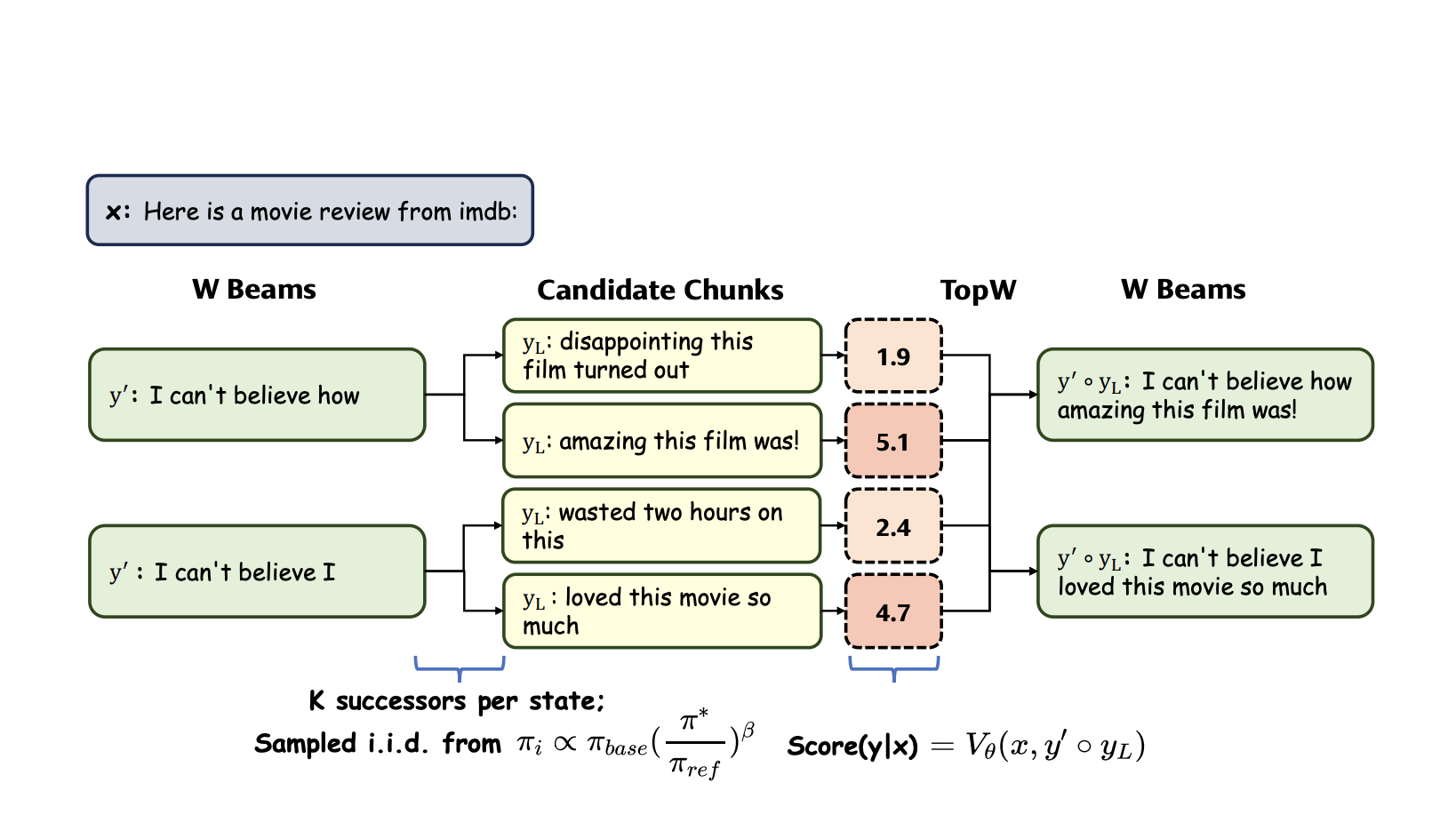

Researchers developed Inference-time alignment methods to integrate human values after fine-tuning LLMs using the implicit and explicit functions without changing the base model. Implicit functions are used for token generation, which conducts word-by-word evaluations and prefers the output with the highest probability. In contrast, explicit functions require a rigid structure to evaluate larger chunks of text and generate the following sequence of words with the highest probability while maintaining overall context. The explicit function is inflexible and computationally expensive, failing to address token-level optimization, while the implicit function faces interpretability issues and requires frequent forward passes, leading to low real-time efficiency.

研究人員在使用隱式和顯式函數對法學碩士進行微調後,開發了推理時間對齊方法來整合人類價值觀,而無需更改基礎模型。隱式函數用於標記生成,逐字評估並優先選擇機率最高的輸出。相較之下,顯式函數需要嚴格的結構來評估較大的文字區塊,並以最高的機率產生以下單字序列,同時保持整體上下文。顯式函數不靈活且計算量大,無法解決token等級的最佳化,而隱式函數則面臨可解釋性問題,需要頻繁的前向傳遞,導致即時效率較低。

To tackle the disadvantages of both functions, the proposed method, Integrated Value Guidance (IVG), combines the implicit function’s token-level optimization and the explicit function’s broader perspective. It was able to ward off adaptation challenges and trade-offs in alignment efficacy, leading to decreased performance discrepancies and making it easier to implement. These advantages facilitated better performance on tasks like controlled sentiment generation and summarization. IVG, combined with the smaller models like GPT-2, could compete with higher models.

為了解決這兩種函數的缺點,所提出的方法整合價值指導(IVG)結合了隱式函數的代幣級最佳化和顯式函數的更廣泛的視角。它能夠避免適應挑戰和對齊效率的權衡,從而減少效能差異並使其更容易實施。這些優勢有助於更好地執行受控情緒生成和摘要等任務。 IVG 與 GPT-2 等較小的模型相結合,可以與更高的模型競爭。

IVG incorporates the two value functions, the implicit and explicit functions, to align the model with human values. First, token-wise sampling fine-tunes individual tokens to a specific sequence length, generating multiple sequences. Then, chunk-level beam search compares the probabilities of these sequences and selects the one with the highest probability. Although this method ensures that the output is more robust, the computational power increases during the inference time due to frequent forward passes, leading to slower responses.

IVG 結合了隱式函數和顯式函數這兩個價值函數,使模型與人類價值保持一致。首先,按標記取樣將各個標記微調到特定的序列長度,產生多個序列。然後,區塊級波束搜尋比較這些序列的機率並選擇機率最高的一個。雖然這種方法確保了輸出更加魯棒,但由於頻繁的前向傳遞,計算能力在推理時間內增加,導致反應速度變慢。

Researchers have used two experimental set-ups to evaluate IVG: 1. Controlled sentiment generation and Summarization, and 2. Instruction-following. In the first one, the GPT-2 model family is used by leveraging synthetic datasets from a gold-reward model to generate positive movie reviews and summarise Reddit posts. In comparison, the second one requires an instruction-tuned model, AlpacaEval 2.0. It employs Tulu Guidance, which uses specific models for implicit function and trains a reward-based model for the explicit function, and Ultraguidance, which fine-tunes a model with Direct Preference Optimization (DPO) for both functions. GPT-4-turbo was used as a reference to assess responses in the second experiment, and IVG consistently performed well.

研究人員使用兩種實驗設定來評估 IVG:1. 受控情緒生成和總結,2. 遵循指令。在第一個模型中,GPT-2 模型系列透過利用黃金獎勵模型的合成資料集來產生正面的電影評論並總結 Reddit 貼文。相較之下,第二個需要指令調整模型 AlpacaEval 2.0。它採用了 Tulu Guidance,它使用隱式函數的特定模型,並為顯式函數訓練基於獎勵的模型,以及 Ultraguidance,它透過直接偏好優化 (DPO) 對這兩個函數的模型進行微調。 GPT-4-turbo 被用作評估第二個實驗中反應的參考,IVG 始終表現良好。

In addition to these two experiments, an ablation study proved that Chunk-Level Beam Search (CBS) had higher speed efficiency than Emulator Fine-Tuning (EFT), which uses the implicit function for fine-tuning. These results have proved that CBS is much better to use in practice.

除了這兩個實驗之外,一項消融研究證明,區塊級波束搜尋(CBS)比使用隱式函數進行微調的模擬器微調(EFT)具有更高的速度效率。這些結果證明CBS在實踐中使用起來會好得多。

In conclusion, Integrated Value Guidance (IVG) offers a novel and efficient approach to aligning large language models with human preferences purely at inference time, bypassing the complexities of traditional fine-tuning. By leveraging implicit and explicit value functions, IVG enhances performance in both token-wise sampling and chunk-level decoding, as demonstrated through significant improvements in sentiment generation, summarization, and instruction-following tasks. The results showed that IVG is a versatile method, providing strong empirical evidence of its ability to outclass existing approaches, making it a promising solution for fine-tuning large models in real-world applications.

總之,綜合價值指導(IVG)提供了一種新穎而有效的方法,可以純粹在推理時將大型語言模型與人類偏好結合起來,從而繞過傳統微調的複雜性。透過利用隱式和顯式價值函數,IVG 增強了 token-wise 採樣和區塊級解碼的效能,正如情緒生成、摘要和指令追蹤任務的顯著改進所證明的那樣。結果表明,IVG 是一種多功能方法,提供了強有力的經驗證據,證明其超越現有方法的能力,使其成為在現實應用中微調大型模型的有前途的解決方案。

Don’t Forget to join our 50k+ ML SubReddit

不要忘記加入我們超過 50k 的 ML SubReddit

Want to get in front of 1 Million+ AI Readers? Work with us here

想要面對超過 100 萬人工智慧讀者嗎?在這裡與我們一起工作

免責聲明:info@kdj.com

所提供的資訊並非交易建議。 kDJ.com對任何基於本文提供的資訊進行的投資不承擔任何責任。加密貨幣波動性較大,建議您充分研究後謹慎投資!

如果您認為本網站使用的內容侵犯了您的版權,請立即聯絡我們(info@kdj.com),我們將及時刪除。

-

-

-

- 柴犬 (SHIB) 躋身鯨魚青睞的山寨幣行列,交易數量激增 360%

- 2024-10-03 16:25:02

- 過去一周,鏈上數據顯示鯨魚對柴犬的興趣顯著增加,使這種山寨幣成為這些大型投資者最青睞的貨幣之一。

-

-

-

-

-

-