IVG: Integrating Human Values into Large Language Models at Inference Time

Oct 03, 2024 at 01:37 pm

Researchers developed inference-time alignment methods that integrate human values into already fine-tuned LLMs through implicit and explicit value functions, leaving the base model unchanged.

Integrating human values into a trained model with learning-based algorithms requires fine-tuning the LLM, which is computationally expensive and time-consuming. Moreover, the fine-tuned model can still generate biased responses that the user does not want. What is needed is a model that adapts efficiently to user preferences in real time through algorithms that intervene at inference time. Such a method freezes the base model, which avoids repeated retraining and reduces the computational cost of fine-tuning LLMs.

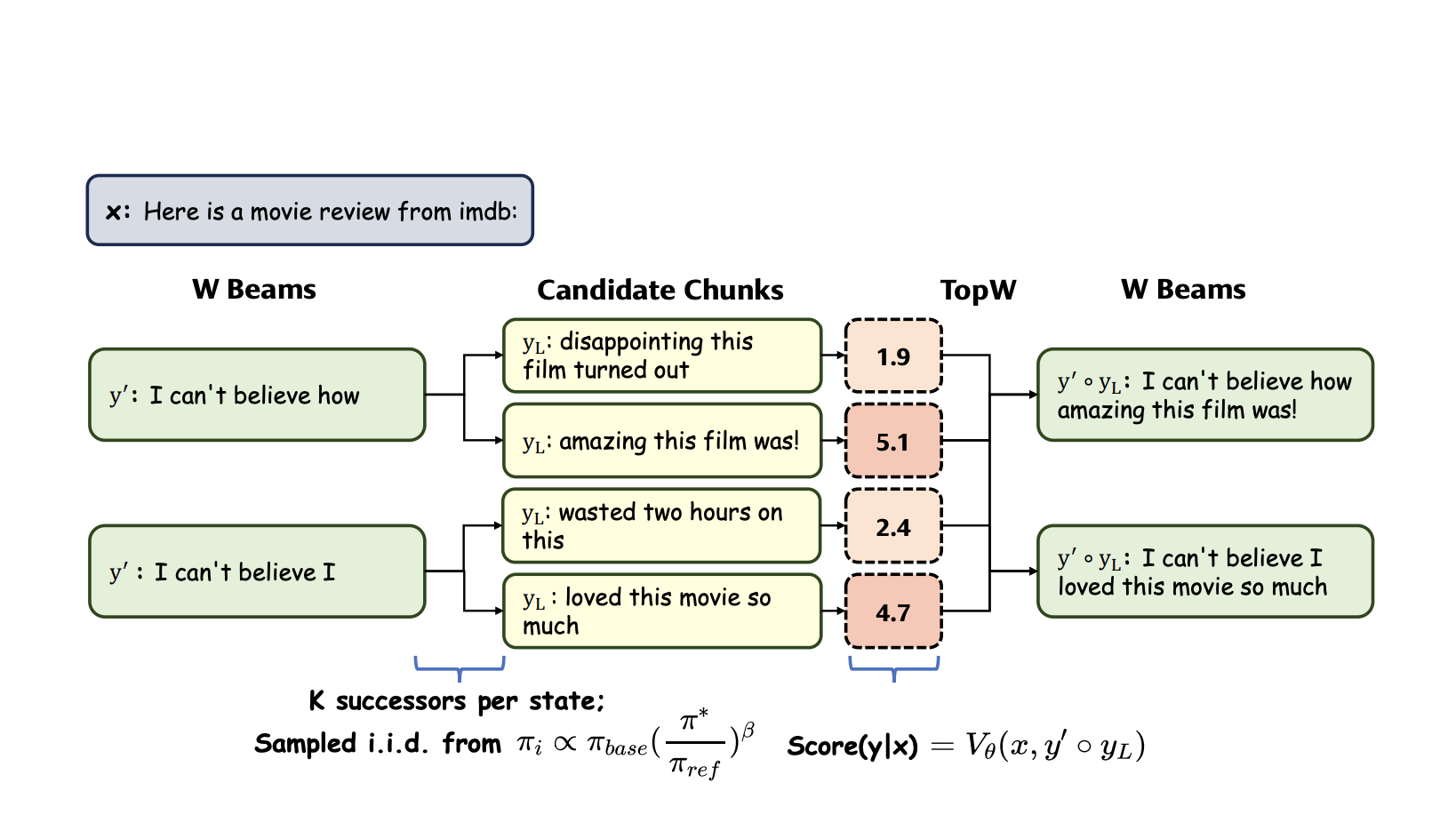

These inference-time alignment methods rely on two kinds of value function, implicit and explicit, and neither changes the base model. Implicit functions operate at the token level: they evaluate the output word by word and prefer the token with the highest probability. Explicit functions, in contrast, require a rigid structure to evaluate larger chunks of text, and they favor the continuation with the highest probability while maintaining overall context. Each has drawbacks. The explicit function is inflexible and computationally expensive and does not address token-level optimization, while the implicit function suffers from interpretability issues and requires frequent forward passes, which lowers real-time efficiency.
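As a rough illustration of token-level guidance, the sketch below reweights a base model's next-token distribution with an implicit value. The article does not spell out the formula, so the form used here is an assumption: the implicit value of a token is taken to be the log-probability ratio between a tuned and an untuned guidance model, scaled by a hypothetical strength parameter `beta`. The function name and the toy numbers are illustrative only.

```python
import math
from typing import Dict


def guided_next_token_probs(
    base_logp: Dict[str, float],
    tuned_logp: Dict[str, float],
    untuned_logp: Dict[str, float],
    beta: float = 1.0,
) -> Dict[str, float]:
    """Reweight base next-token log-probs with an implicit value.

    Assumption (not stated in the article): the implicit value of a token
    is log p_tuned(token) - log p_untuned(token), scaled by beta.
    """
    scores = {
        tok: base_logp[tok] + beta * (tuned_logp[tok] - untuned_logp[tok])
        for tok in base_logp
    }
    # Softmax with max-subtraction for numerical stability.
    top = max(scores.values())
    norm = sum(math.exp(s - top) for s in scores.values())
    return {tok: math.exp(s - top) / norm for tok, s in scores.items()}


# Toy two-token vocabulary: the base model is indifferent, while the tuned
# guidance model prefers "good" over "bad".
base = {"good": math.log(0.5), "bad": math.log(0.5)}
tuned = {"good": math.log(0.8), "bad": math.log(0.2)}
untuned = {"good": math.log(0.5), "bad": math.log(0.5)}

probs = guided_next_token_probs(base, tuned, untuned, beta=1.0)
print(probs)
```

With `beta=0.0` the function returns the base distribution unchanged, which mirrors the idea that the base model itself stays frozen and only the sampling step is steered.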

To address the drawbacks of both functions, the proposed method, Integrated Value Guidance (IVG), combines the implicit function’s token-level optimization with the explicit function’s broader perspective. This combination avoids adaptation challenges and trade-offs in alignment efficacy, reduces performance discrepancies, and makes the method easier to implement. These advantages translated into better performance on tasks such as controlled sentiment generation and summarization. Combined with smaller models such as GPT-2, IVG could compete with larger models.

IVG applies the two value functions, implicit and explicit, at different granularities to align the model with human values. First, token-wise sampling steers the choice of individual tokens up to a specific sequence length, generating multiple candidate sequences. Then, chunk-level beam search compares these sequences and selects the one with the highest probability. Although this makes the output more robust, the frequent forward passes increase the computation required at inference time, leading to slower responses.
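The two-stage procedure described above can be sketched as a small beam search over chunks. This is a minimal sketch under stated assumptions, not the researchers' implementation: `sample_chunk` stands in for token-wise sampling from the (guided) model, `value_fn` stands in for the explicit function that scores a partial sequence, and `beam_width`, `children_per_beam`, and `num_chunks` are hypothetical parameter names. The toy sampler and scorer exist only to make the example runnable.

```python
import random
from typing import Callable, List, Tuple

Tokens = Tuple[str, ...]


def chunk_beam_search(
    prompt: Tokens,
    sample_chunk: Callable[[Tokens], Tokens],
    value_fn: Callable[[Tokens], float],
    beam_width: int = 2,
    children_per_beam: int = 3,
    num_chunks: int = 4,
) -> Tokens:
    """Grow sequences chunk by chunk, keeping the best-scoring beams."""
    beams: List[Tokens] = [prompt]
    for _step in range(num_chunks):
        # Expand: every beam samples several candidate continuation chunks.
        candidates = [
            beam + sample_chunk(beam)
            for beam in beams
            for _child in range(children_per_beam)
        ]
        # Prune: keep only the candidates the scoring function rates highest.
        candidates.sort(key=value_fn, reverse=True)
        beams = candidates[:beam_width]
    return beams[0]


# Toy stand-ins (assumptions for illustration only).
VOCAB = ("great", "fine", "dull", "awful")
SCORES = {"great": 2.0, "fine": 1.0, "dull": -1.0, "awful": -2.0}
rng = random.Random(0)


def toy_sample_chunk(prefix: Tokens, chunk_len: int = 2) -> Tokens:
    # A real system would sample tokens from a language model given `prefix`.
    return tuple(rng.choice(VOCAB) for _ in range(chunk_len))


def toy_value(seq: Tokens) -> float:
    # A real system would call a trained scoring model here.
    return sum(SCORES.get(tok, 0.0) for tok in seq)


best = chunk_beam_search(("the", "film", "was"), toy_sample_chunk, toy_value)
print(" ".join(best), toy_value(best))
```

Each step makes `beam_width * children_per_beam` sampling calls plus one scoring call per candidate, which is where the extra inference-time cost mentioned above comes from.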

Researchers evaluated IVG in two experimental setups: (1) controlled sentiment generation and summarization, and (2) instruction following. The first uses the GPT-2 model family and synthetic datasets from a gold reward model to generate positive movie reviews and summarize Reddit posts. The second uses instruction-tuned models and is evaluated on the AlpacaEval 2.0 benchmark. It employs Tulu Guidance, which uses specific models for the implicit function and trains a reward-based model for the explicit function, and Ultraguidance, which fine-tunes a model with Direct Preference Optimization (DPO) for both functions. GPT-4-turbo was used as a reference to assess responses in the second experiment, and IVG consistently performed well.

In addition to these two experiments, an ablation study showed that Chunk-Level Beam Search (CBS) was faster than Emulated Fine-Tuning (EFT), which uses the implicit function for fine-tuning. This result suggests that CBS is the more practical choice.

In conclusion, Integrated Value Guidance (IVG) offers a novel and efficient approach to aligning large language models with human preferences purely at inference time, bypassing the complexities of traditional fine-tuning. By leveraging implicit and explicit value functions, IVG enhances performance in both token-wise sampling and chunk-level decoding, as demonstrated by the improvements reported in sentiment generation, summarization, and instruction-following tasks. The results indicate that IVG is a versatile method that can outperform existing approaches, making it a promising solution for aligning large models in real-world applications.
