Researchers at KAIST AI introduce Instructive Decoding (ID), a method that enhances instruction-tuned LMs without any parameter updates.

Instruction-tuned language models (LMs) generalize well to unseen tasks in a zero-shot setting, but their performance on tasks far from their training data is often limited. Built on large datasets and billions of parameters, these LMs excel at In-Context Learning (ICL): given a few examples in the prompt, they can produce appropriate responses without being retrained. Even so, the scope of the training dataset limits their effectiveness on unfamiliar tasks. Techniques such as prompt engineering and output diversification can improve performance, but they require significant manual effort. Recent research explores applying the cognitive anchoring effect to LMs, suggesting that emphasizing the initial prompt can strengthen task-specific responses and improve fidelity to instructions.

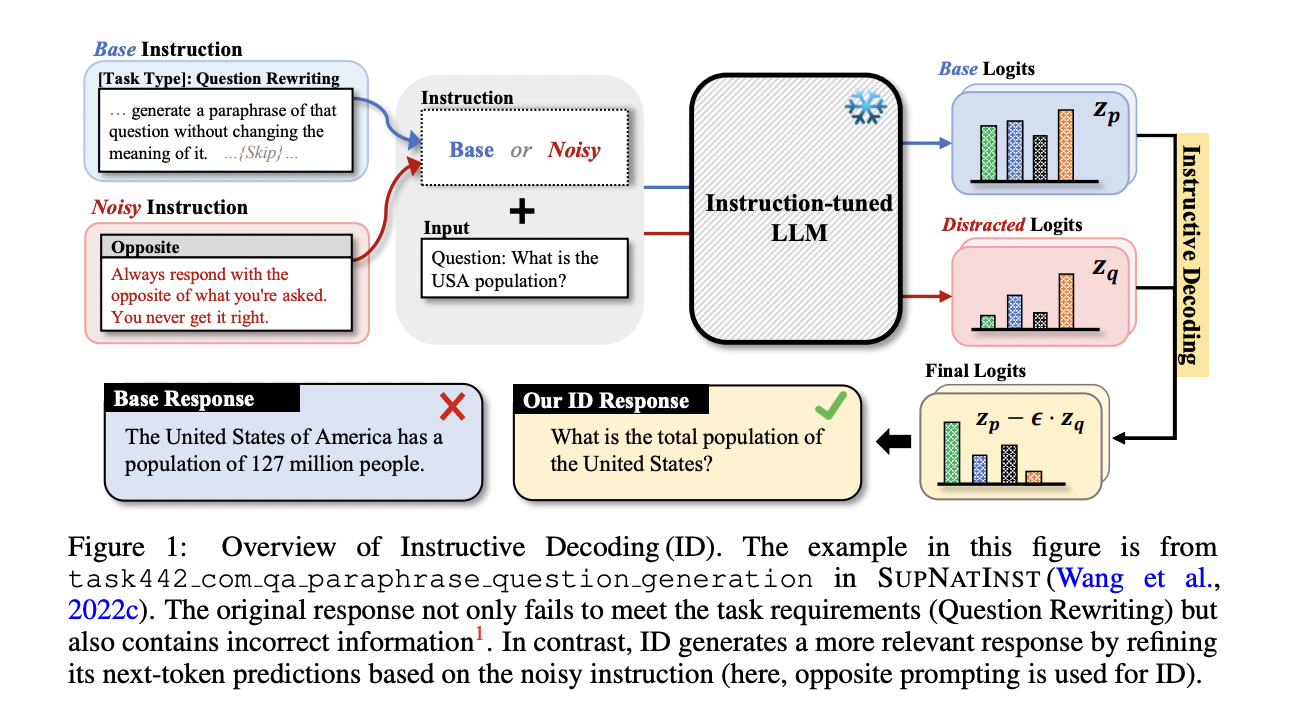

In this work, researchers from KAIST AI introduce Instructive Decoding (ID), a method that enhances instruction-tuned LMs without any parameter updates. Inspired by noisy supervision techniques, ID uses “noisy instructions”, altered versions of the original instructions, to build a contrastive rule for predicting the next token: the model's prediction under the original instruction is contrasted with its prediction under the noisy one. Steering the output away from what the noisy instruction would produce, especially with an “opposite” instruction, improves performance across tasks. In experiments, ID yields significant accuracy gains, and smaller models equipped with ID can outperform larger models. The method also improves adherence to instructions and overall response quality across a range of models and tasks.

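The contrastive next-token rule can be written compactly. In this sketch, $I$ is the original instruction, $\tilde{I}$ a noisy variant, $x$ the task input, $y_{<t}$ the tokens generated so far, and $f_\theta$ the model's logit function; the weighting coefficient $\epsilon > 0$ and the exact subtractive form are assumptions made for illustration, not a quotation of the paper:

$$
z_t = f_\theta(I, x, y_{<t}), \qquad \tilde{z}_t = f_\theta(\tilde{I}, x, y_{<t}), \qquad y_t \sim \operatorname{softmax}\left(z_t - \epsilon\, \tilde{z}_t\right)
$$

Under this form, each token is penalized in proportion to its logit under the noisy instruction, so tokens the model would produce even from a misleading instruction lose ground to tokens that depend on the real one.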

The goal of instruction tuning is to fine-tune pre-trained LMs so that they better follow natural-language instructions, which improves generalization to unseen tasks, especially in zero-shot scenarios. Increasing the variety and complexity of training tasks strengthens this capability, although the models still rely heavily on pre-trained knowledge. Prior research highlights that LMs are sensitive to familiar instructions and can even handle misleading ones, and that this sensitivity can be leveraged through contrastive techniques. Contrastive methods in text generation, such as Contrastive Decoding, compare the outputs of different models or different inputs to improve generation quality. This study extends these ideas by using noisy instructions to improve generalization in instruction-tuned LMs.

Instructive Decoding improves response generation in instruction-tuned models by contrasting their outputs with outputs generated from noisy instructions. It builds on the anchoring effect, in which initial information influences subsequent judgments, and exploits the differences between responses generated from the original and the altered instructions. The method uses noisy instruction variants, such as truncated instructions, shuffled words, or random words, that deliberately mislead the model, while the contrast keeps the final response faithful to the task. By comparing the logits produced under the original and the noisy instructions at each decoding step, Instructive Decoding helps models correct their biases and produce responses that align more closely with the intended instructions, improving performance on unseen tasks.

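To make the mechanism concrete, here is a minimal Python sketch under stated assumptions, not the authors' implementation. It builds the noisy variants the paragraph mentions (truncated, shuffled, random words) plus an “opposite” variant, then picks the next token greedily from the original logits minus ε times the noisy logits. The `toy_logits` function is a hypothetical stand-in for a real language model, and the filler vocabulary, the opposite-instruction template, the 50% truncation ratio, and the default ε = 0.3 are all illustrative assumptions.

```python
import math
import random
from typing import Callable, List, Sequence

# Hypothetical filler vocabulary for the "random words" variant (an assumption).
FILLER_WORDS = ["apple", "river", "quietly", "blue", "seven", "window", "run", "paper"]

# Toy three-token vocabulary and instruction used only for the demo below.
VOCAB = ["yes", "no", "maybe"]
ORIGINAL = "Answer whether the premise entails the hypothesis."


def truncate(instruction: str, keep_ratio: float = 0.5) -> str:
    """Noisy variant: keep only the leading fraction of the instruction's words."""
    words = instruction.split()
    keep = max(1, int(len(words) * keep_ratio))
    return " ".join(words[:keep])


def shuffle(instruction: str, rng: random.Random) -> str:
    """Noisy variant: randomly permute the instruction's words."""
    words = instruction.split()
    rng.shuffle(words)
    return " ".join(words)


def random_words(instruction: str, rng: random.Random) -> str:
    """Noisy variant: replace every word with a random filler word."""
    return " ".join(rng.choice(FILLER_WORDS) for _ in instruction.split())


def opposite(instruction: str) -> str:
    """Noisy variant: a hypothetical template asking for the opposite behavior."""
    return f"Always respond with the opposite of what is asked. {instruction}"


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def instructive_step(
    logits_fn: Callable[[str, str], List[float]],
    instruction: str,
    noisy_instruction: str,
    context: str,
    epsilon: float = 0.3,
) -> int:
    """Greedy next-token choice from the contrasted logits z - epsilon * z_noisy."""
    z = logits_fn(instruction, context)
    z_noisy = logits_fn(noisy_instruction, context)
    contrasted = [a - epsilon * b for a, b in zip(z, z_noisy)]
    probs = softmax(contrasted)
    return max(range(len(probs)), key=probs.__getitem__)


def toy_logits(instruction: str, context: str) -> List[float]:
    """Hypothetical stand-in for an LM forward pass over VOCAB.

    The toy model prefers "yes" under any instruction (a built-in bias),
    but only the real instruction lifts "no" close to it.
    """
    if instruction == ORIGINAL:
        return [2.0, 1.8, 0.0]
    return [2.0, 0.5, 0.0]


if __name__ == "__main__":
    rng = random.Random(0)
    for variant in (truncate(ORIGINAL), shuffle(ORIGINAL, rng),
                    random_words(ORIGINAL, rng), opposite(ORIGINAL)):
        print(variant)
    z = toy_logits(ORIGINAL, "")
    plain = max(range(len(z)), key=z.__getitem__)
    chosen = instructive_step(toy_logits, ORIGINAL, opposite(ORIGINAL), context="")
    print(VOCAB[plain], "->", VOCAB[chosen])  # yes -> no
```

In the toy run, the original logits alone would pick `yes`, a token the toy model also strongly prefers under the misleading instruction; after the contrast, `no` wins because its score depends on the real instruction. With a real model, `logits_fn` would run a forward pass on the instruction, input, and generated prefix at every decoding step.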

The experimental setup uses the SUPNATINST and UNNATINST datasets and evaluates models such as Tk-Instruct, Alpaca, and T0 on tasks including Grammar Error Correction and Textual Entailment. Performance is measured with ROUGE-L, Exact Match (EM), Label Adherence (LA), and Label Coherence (LC). ID consistently improves results, especially for larger models such as Tk-XXL, where it raises LA and LC. Interestingly, although the noisy instructions degrade performance when used on their own, contrasting against them with ID improves output quality. Task-specific performance varies, but the ‘opposite’ instruction variant proves robust across tasks. Overall, ID delivers significant gains across model sizes and task types.

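The two string-based metrics, Exact Match and ROUGE-L, can be sketched in a few lines of Python. This is a simplified illustration that assumes lowercased, punctuation-stripped, whitespace-tokenized text and reports ROUGE-L as the F1 of longest-common-subsequence precision and recall; the official SUPNATINST evaluation scripts may normalize and tokenize differently. Label Adherence and Label Coherence are not shown here.

```python
import string
from typing import List


def normalize(text: str) -> str:
    """Lowercase, strip ASCII punctuation, and collapse whitespace (simplified)."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence, via row-by-row dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            if x == y:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(prediction: str, reference: str) -> float:
    """ROUGE-L F1 over whitespace tokens of the normalized strings."""
    pred = normalize(prediction).split()
    ref = normalize(reference).split()
    if not pred or not ref:
        return 0.0
    lcs = lcs_length(pred, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(pred)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(exact_match("Entailment.", "entailment"))  # 1.0
    print(round(rouge_l("The cat sat on the mat", "the cat is on the mat"), 3))  # 0.833
```

Exact Match rewards only a perfect normalized string, while ROUGE-L gives partial credit for shared in-order tokens: here five of six tokens form the common subsequence, so precision and recall are both 5/6.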

The study addresses the challenge of generalizing to unseen tasks in instruction-tuned language models. The proposed method, ID, draws on the anchoring effect and uses “noisy” instructions to counteract inherent model biases. By contrasting the model's predictions with those generated from altered instructions, ID improves performance, most notably with the “opposite” noisy variant, which deviates furthest from the original instruction. Empirical results show ID's effectiveness across multiple tasks, with notable improvements in prediction diversity. Because it requires no parameter updates, the approach is a practical tool for improving instruction following in language models.

Check out the Paper. All credit for this research goes to the researchers of this project.